What’s next in Google AI infrastructure: Scaling for the agentic era

Amin Vahdat

SVP and Chief Technologist, AI and Infrastructure

Mark Lohmeyer

VP and GM, AI and Computing Infrastructure

AI is evolving from answering questions to reasoning and taking action. Companies that want to lead in today’s agentic era require computing infrastructure designed and optimized for these new requirements. Today at Google Cloud Next, we are introducing new AI infrastructure capabilities that help you innovate faster, deliver compelling user and customer experiences, and optimize for cost and energy efficiency, all at massive scale.

The shift to agentic intelligence

In the agentic era, a single intent triggers a chain reaction. Unlike a chat exchange, where one prompt yields one response, a primary AI agent decomposes the goal into specific tasks for a fleet of specialized agents, which then collaborate, preserve state, and use reinforcement learning to deliver outcomes in real time.

This process scales intelligence per interaction, but also creates complexity that yesterday’s architectures cannot support without spiraling costs or performance bottlenecks. To scale efficiently and effectively, you must move beyond manually integrating fragmented components and technologies. To deliver agentic experiences that are smart, fast, scalable, and cost-effective, you need a unified infrastructure stack that spans purpose-built hardware, open software, and flexible consumption models.

Google’s AI Hypercomputer is AI-optimized infrastructure built for the agentic era, engineered to deliver on these new requirements. This is the same foundation that powers Google’s flagship Gemini models, consumer AI services, and enterprise AI offerings. Today, we are announcing a significant expansion of our AI infrastructure portfolio, including:

- TPU 8t and TPU 8i, our eighth generation TPUs
- A5X bare metal instances, powered by NVIDIA Vera Rubin NVL72
- Axion N4A VMs, powered by our custom Axion Arm-based CPUs
- Google Compute Engine 4th generation VMs, powered by Intel and AMD x86-based CPUs
- Virgo Network, our breakthrough data center fabric for AI workloads
- Google Cloud Managed Lustre, a high-performance parallel file system
- Z4M VMs with high-capacity local SSD storage and RDMA for open parallel file systems
- Dedicated KV Cache scalable storage subsystem
- Native PyTorch support for TPUs
- New Google Kubernetes Engine (GKE) capabilities for agent-native workload orchestration

Taken together, these capabilities will help you accelerate the development of models and complex agentic workflows, deliver useful, responsive services to customers, and reduce costs while using energy responsibly at scale.

Let’s take a closer look.

Introducing our eighth-generation TPU systems, purpose-built for agentic AI

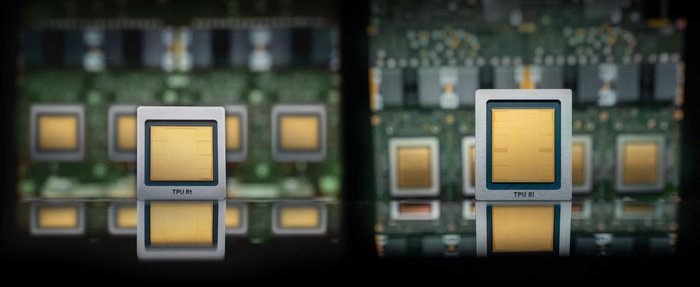

Today, we’re pleased to announce the eighth generation of our Tensor Processing Units (TPUs), which for the first time includes two distinct chips and specialized systems, engineered for the agentic era.

TPU 8t is our training powerhouse, designed specifically for high-throughput AI workloads. It redefines the scale of AI development, delivering nearly 3x higher compute performance than previous generations to shrink training timelines for massive models. A single superpod packs 9,600 chips, providing 121 exaflops of compute and two petabytes of shared memory connected through high-speed inter-chip interconnects (ICI). This massive pool of compute and unified memory, combined with doubled ICI bandwidth, helps even the most complex models achieve near-linear scaling and maximum system utilization. With more than 1 million TPU chips in a single cluster, orchestrated by Pathways and JAX, we can now turn months of training into weeks.
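To put the superpod figures in per-chip terms, the arithmetic can be sketched as follows. This is a back-of-the-envelope calculation only, assuming decimal SI prefixes (1 exaflop = 10^18 FLOP/s, 1 PB = 10^15 bytes) and an even split across all 9,600 chips; the post does not say which numeric precision the 121-exaflop figure refers to.

```python
# Per-chip averages implied by the quoted TPU 8t superpod figures.
# Assumptions: decimal SI prefixes and an even split across chips.
# The numeric precision behind the exaflops figure is not stated in the post.

SUPERPOD_CHIPS = 9_600
SUPERPOD_EXAFLOPS = 121
SUPERPOD_SHARED_MEMORY_PB = 2


def per_chip_petaflops(total_exaflops: float, chips: int) -> float:
    """Average compute per chip in petaflops (1 EF = 1,000 PF)."""
    return total_exaflops * 1_000 / chips


def per_chip_memory_gb(total_pb: float, chips: int) -> float:
    """Average share of the pooled memory per chip in GB (1 PB = 1e6 GB)."""
    return total_pb * 1_000_000 / chips


if __name__ == "__main__":
    pf = per_chip_petaflops(SUPERPOD_EXAFLOPS, SUPERPOD_CHIPS)
    gb = per_chip_memory_gb(SUPERPOD_SHARED_MEMORY_PB, SUPERPOD_CHIPS)
    print(f"~{pf:.1f} PF per chip")  # ~12.6 PF per chip
    print(f"~{gb:.0f} GB per chip")  # ~208 GB per chip
```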

TPU 8i is our breakthrough reasoning system for inference and reinforcement learning (RL), engineered to deliver the ultra-low latency required by agentic workflows and Mixture of Experts (MoE) models. By tripling on-chip SRAM to 384 MB and increasing high-bandwidth memory (HBM) to 288 GB, it breaks through the memory wall, hosting massive KV caches entirely on silicon. It also doubles ICI bandwidth to 19.2 Tb/s, reduces the ICI network diameter by over 50%, and introduces a dedicated Collectives Acceleration Engine (CAE), which reduces on-chip latency by up to 5x to minimize lag during high-concurrency requests. With this design, TPU 8i delivers 80% better performance per dollar for inference than the prior generation, enabling fast, interactive user experiences at lower cost.
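Why memory capacity matters for agentic serving becomes clearer with a KV cache sizing estimate. The sketch below uses the standard per-token formula (one key and one value vector per KV head per layer) against the quoted 288 GB of HBM. The model shape, the 100 GB reserved for weights and other state, and the decimal-GB interpretation are all hypothetical assumptions, not properties of any Google model.

```python
# Back-of-the-envelope KV cache sizing against a 288 GB HBM budget.
# The model shape and the weights reservation are hypothetical.

from dataclasses import dataclass

HBM_BYTES = 288 * 10**9  # 288 GB, assuming decimal gigabytes


@dataclass(frozen=True)
class ModelShape:
    num_layers: int
    num_kv_heads: int    # grouped-query attention: fewer KV heads than query heads
    head_dim: int
    bytes_per_elem: int  # e.g. 2 for BF16, 1 for FP8


def kv_bytes_per_token(m: ModelShape) -> int:
    # Factor of 2: one key vector and one value vector per KV head per layer.
    return 2 * m.num_layers * m.num_kv_heads * m.head_dim * m.bytes_per_elem


def sequences_that_fit(m: ModelShape, context_tokens: int, budget_bytes: int) -> int:
    """Number of full-length sequences whose KV cache fits in the budget."""
    return budget_bytes // (kv_bytes_per_token(m) * context_tokens)


if __name__ == "__main__":
    shape = ModelShape(num_layers=64, num_kv_heads=8, head_dim=128, bytes_per_elem=1)
    weights_bytes = 100 * 10**9  # hypothetical space reserved for weights etc.
    budget = HBM_BYTES - weights_bytes
    print(kv_bytes_per_token(shape))                   # 131072 (128 KiB per token)
    print(sequences_that_fit(shape, 131_072, budget))  # 10 sequences of 128K tokens
```

This is a single-chip view; real deployments typically shard weights and KV caches across many chips, so the per-chip budget is only one input to capacity planning.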

TPU 8t and TPU 8i will be available to Cloud customers soon. To learn more, check out this deep dive on the architecture.

A5X with NVIDIA Vera Rubin platform

We know that one size doesn't fit all. Different customers have different workloads, different requirements, and different use cases. So, we also partner deeply with NVIDIA to deliver the latest GPU platforms as highly reliable and scalable services in Google Cloud. We will be among the first to deliver instances based on the next-generation Vera Rubin platform when it becomes available later this year.

We are also co-engineering the open-source Falcon networking protocol with NVIDIA via the Open Compute Project, pushing the frontiers of reliable transport protocols. A5X will implement a variety of innovative concepts from Falcon.

Thinking Machines Lab, for example, uses our NVIDIA-based infrastructure to power Tinker, an open platform for reinforcement learning and fine-tuning of frontier models for specialized use cases, and has achieved over 2x faster training and serving with Google’s AI Hypercomputer.

Fueling agentic logic and reinforcement learning with Axion, Intel, and AMD

While GPUs and TPUs excel at training and serving AI models, they need to be complemented with high-performance CPU-based services to handle the complex logic, tool calls, and feedback loops that surround the core AI model. Our new Axion-powered N4A CPU instances deliver outstanding price-performance for these agent runtimes. In fact, GKE Agent Sandbox with Google Axion N4A offers up to 30% better price-performance than agent workloads on other hyperscalers. This efficiency extends across our entire portfolio, including our 4th generation Compute Engine VM families, powered by the latest x86 processors from Intel and AMD. These are optimized for the broadest range of RL tasks, such as RL reward calculation, agent orchestration, and nested virtualization, providing the right capabilities for every AI workload.
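A "percent better price-performance" figure is easy to misread as the same percentage off the bill. A minimal sketch of the conversion, assuming price-performance means work delivered per dollar, shows that a fixed workload costs 1 / (1 + X/100) of the baseline. The labels reuse the 30% (Axion N4A) and 80% (TPU 8i) figures quoted in this post; the function name is illustrative.

```python
def relative_cost_for_same_work(perf_per_dollar_gain_pct: float) -> float:
    """Cost of a fixed workload relative to the baseline.

    Assumes "X% better price-performance" means work per dollar is
    (1 + X/100) times the baseline, so the same work costs 1 / (1 + X/100).
    """
    return 1.0 / (1.0 + perf_per_dollar_gain_pct / 100.0)


if __name__ == "__main__":
    for label, gain in [("Axion N4A vs. other hyperscalers", 30),
                        ("TPU 8i vs. prior generation", 80)]:
        saving = 1.0 - relative_cost_for_same_work(gain)
        print(f"{label}: ~{saving:.0%} lower cost for the same work")
    # Axion N4A vs. other hyperscalers: ~23% lower cost for the same work
    # TPU 8i vs. prior generation: ~44% lower cost for the same work
```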

Virgo Network for data center scale-out fabric

As part of AI Hypercomputer, the Virgo Network is designed to meet the demanding requirements of modern large-scale AI workloads. Its collapsed fabric architecture, with 4x the bandwidth of previous generations, eliminates the "scaling tax" (the efficiency lost to communication overhead as clusters grow) to deliver staggering peak computing power. This capacity helps the most ambitious AI workloads scale with near-linear efficiency.

With Virgo Network and TPU 8t, we can connect 134,000 TPUs into a single fabric in a single data center, and connect more than one million TPUs across multiple data center sites into a training cluster — essentially transforming globally distributed infrastructure into one seamless supercomputer.

We are also making Virgo Network available for A5X (powered by NVIDIA Vera Rubin NVL72), supporting up to 80,000 GPUs in a single data center, and up to 960,000 GPUs across multiple sites.
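The per-site and multi-site figures also imply how many data centers a full-size cluster spans. The sketch below computes only a lower bound, assuming every site is filled to its quoted single-data-center maximum; actual deployments may spread across more sites.

```python
import math


def min_sites(total_accelerators: int, per_site_max: int) -> int:
    """Smallest number of data center sites that could host the total,
    assuming each site is filled to its quoted single-site maximum."""
    return math.ceil(total_accelerators / per_site_max)


if __name__ == "__main__":
    print(min_sites(1_000_000, 134_000))  # TPU 8t: at least 8 sites
    print(min_sites(960_000, 80_000))     # A5X: at least 12 sites
```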

Storage: Minimizing data bottlenecks

A massive compute cluster is only as effective as the storage system feeding it data. To keep storage from becoming a bottleneck as compute gets faster, we are delivering four key storage advancements that let you:

- Accelerate training and inference: Google Cloud Managed Lustre now delivers 10 TB/s of bandwidth, a 10x improvement over last year and up to 20x faster than other hyperscalers. We’ve also increased its capacity to 80 petabytes. These advancements are powered by our new C4NX instances and Hyperdisk Exapools.

- Minimize latency: Managed Lustre can use the new TPUDirect and RDMA capabilities to let data bypass the host and move directly to the accelerators. By removing this processing overhead, your AI agents can respond with the near-instant speed users need.

- Maintain peak utilization for training: Rapid Buckets on Google Cloud Storage transforms object storage with sub-millisecond latency and 20 million operations per second. This helps large-scale training checkpoints and recoveries happen near-instantly, so your accelerators can maintain 95% or higher utilization, accelerating training cycles while making cost-effective use of valuable TPUs and GPUs.

- Build custom solutions: For ISVs and organizations that want to build their own storage solutions, we are launching the Z4M instance, engineered for customers who want to integrate trusted parallel file systems like VAST Data or Sycomp. Each Z4M instance scales to a massive 168 TiB of local SSD capacity and can be deployed in RDMA clusters of thousands of machines.
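Storage bandwidth shows up in accelerator utilization most directly through checkpointing. A minimal model, assuming fully synchronous (blocking) checkpoints, no failures, and that the job achieves the full aggregate bandwidth (real jobs often do not), computes the fraction of wall-clock time spent computing. The 5 TB checkpoint, 30-minute interval, and 100 GB/s comparison tier are hypothetical; only the 10 TB/s figure comes from the Managed Lustre announcement.

```python
def checkpoint_write_seconds(checkpoint_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to write one checkpoint at a given aggregate bandwidth."""
    return checkpoint_bytes / bandwidth_bytes_per_s


def utilization_with_blocking_checkpoints(interval_s: float, write_s: float) -> float:
    """Fraction of wall-clock time spent computing when training pauses for
    each checkpoint write (no failures, no asynchronous checkpointing)."""
    return interval_s / (interval_s + write_s)


if __name__ == "__main__":
    TB = 10**12
    ckpt = 5 * TB        # hypothetical checkpoint size
    interval = 30 * 60   # hypothetical: one checkpoint every 30 minutes
    for label, bw in [("10 TB/s (quoted Managed Lustre bandwidth)", 10 * TB),
                      ("100 GB/s (hypothetical slower tier)", 0.1 * TB)]:
        w = checkpoint_write_seconds(ckpt, bw)
        u = utilization_with_blocking_checkpoints(interval, w)
        print(f"{label}: write {w:.1f} s, utilization {u:.2%}")
    # 10 TB/s: write 0.5 s, utilization 99.97%
    # 100 GB/s: write 50.0 s, utilization 97.30%
```

Asynchronous checkpointing and failure recovery change the picture, but the model shows why faster storage shortens the stalls that keep accelerators idle.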

These new options round out a comprehensive storage portfolio, pairing the raw power of the AI Hypercomputer stack with the right storage service for each use case.

GKE: Orchestration for agent-native workloads

In the agentic era, intelligence is only as effective as the speed at which it can be scaled. So, we’ve transformed GKE to serve as the premier orchestration engine for agent-native workloads.

Reducing latency across the stack

To keep agents responsive, we optimize every millisecond of the startup and scale-out process. By streamlining how infrastructure responds to surges in demand, GKE helps ensure that your agents are ready the moment a user engages with the system. New in GKE are:

- Accelerated node and pod startup: GKE nodes now start up to 4x faster, while pod startup times have been cut by up to 80%.

- Rapid model loading: Using the Run:ai Model Streamer and Rapid Cache in Google Cloud Storage, models now load 5x faster, removing a traditional storage bottleneck.
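Because these improvements stack, it helps to see how they compound on a cold start. Note that cutting a time by 80% leaves 0.2x of it, which is a 5x speedup. The sketch below assumes the three stages run sequentially and applies the best-case ("up to") factors quoted above; the baseline stage times are hypothetical placeholders, not measurements.

```python
# Hypothetical baseline cold-start stage times in seconds (not measured values).
BASELINE_S = {"node_startup": 60.0, "pod_startup": 20.0, "model_load": 100.0}

# Best-case time multipliers from the quoted GKE figures:
# nodes up to 4x faster, pod startup cut by up to 80% (0.2x the time),
# and model loading 5x faster.
TIME_MULTIPLIER = {"node_startup": 1 / 4, "pod_startup": 1 - 0.80, "model_load": 1 / 5}


def cold_start_seconds(stage_times: dict[str, float]) -> float:
    """Total cold-start latency, assuming the stages run one after another."""
    return sum(stage_times.values())


def apply_improvements(stage_times: dict[str, float],
                       multipliers: dict[str, float]) -> dict[str, float]:
    """Scale each stage's time by its improvement multiplier."""
    return {stage: t * multipliers[stage] for stage, t in stage_times.items()}


if __name__ == "__main__":
    before = cold_start_seconds(BASELINE_S)
    after = cold_start_seconds(apply_improvements(BASELINE_S, TIME_MULTIPLIER))
    print(f"{before:.0f} s -> {after:.0f} s ({before / after:.1f}x faster)")
    # 180 s -> 39 s (4.6x faster)
```

With these placeholders, the overall gain is smaller than the best single-stage gain, because the end-to-end time is dominated by whichever stage remains slowest.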

Intelligent routing with AI-powered Inference Gateway

Building on last year's introduction of GKE Inference Gateway, we are using "AI for AI" to solve the complexities of serving at scale.

Inference Gateway’s new predictive latency boost replaces heuristic guesswork with machine-learning-driven, real-time, capacity-aware routing. This intelligent orchestration cuts time-to-first-token (TTFT) by more than 70% without manual tuning. For businesses, this translates directly into more natural voice conversations and smooth, real-time interactions across a range of use cases.
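To illustrate the general idea of latency-predictive routing (not how Inference Gateway's predictive latency boost is implemented, which this post does not describe), here is a toy sketch. It fits a one-feature least-squares model from hypothetical history, predicts each replica's TTFT from the prefill work ahead of the new request, and routes to the minimum. The `Replica` fields, including the prefix-cache feature, and the history data are hypothetical. In the example, the learned predictor picks a different replica than a simple least-queued heuristic would.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    name: str
    queued_prefill_tokens: int  # prompt tokens already waiting on this replica
    cached_prefix_tokens: int   # tokens of the new prompt already in this replica's KV cache


def fit_linear(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for ttft_ms = a * pending_tokens + b."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, mean_y - a * mean_x


def pending_tokens(r: Replica, prompt_tokens: int) -> int:
    # Work ahead of the new request's first token: the existing queue plus the
    # part of the new prompt this replica has not already cached.
    return r.queued_prefill_tokens + max(0, prompt_tokens - r.cached_prefix_tokens)


def predict_ttft_ms(r: Replica, prompt_tokens: int, a: float, b: float) -> float:
    return a * pending_tokens(r, prompt_tokens) + b


def pick_replica(replicas: list[Replica], prompt_tokens: int, a: float, b: float) -> Replica:
    """Route to the replica with the lowest predicted TTFT."""
    return min(replicas, key=lambda r: predict_ttft_ms(r, prompt_tokens, a, b))


if __name__ == "__main__":
    # Hypothetical history of (pending tokens, observed TTFT in ms).
    a, b = fit_linear([0, 1000, 2000, 4000], [50, 150, 250, 450])
    replicas = [
        Replica("A", queued_prefill_tokens=3000, cached_prefix_tokens=0),
        Replica("B", queued_prefill_tokens=500, cached_prefix_tokens=0),
        Replica("C", queued_prefill_tokens=1200, cached_prefix_tokens=750),
    ]
    least_queued = min(replicas, key=lambda r: r.queued_prefill_tokens)
    predicted = pick_replica(replicas, prompt_tokens=800, a=a, b=b)
    print(least_queued.name, predicted.name)  # B C
```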

Inference Gateway can be deployed alongside llm-d, a Kubernetes-native high-performance distributed LLM inference framework, which was recently accepted as a Cloud Native Computing Foundation (CNCF) Sandbox project. Google Cloud is proud to be a founding contributor to llm-d alongside Red Hat, IBM Research, CoreWeave, and NVIDIA, uniting around a clear, industry-defining vision: any model, any accelerator, any cloud.

Open software ecosystem for the full AI lifecycle

Hardware reaches its full potential through co-designed software. AI Hypercomputer enables engineers to move faster by providing native, optimized support for the industry’s most popular frameworks, including JAX, PyTorch, and vLLM. This open software layer reduces friction between development and deployment, translating to faster time-to-market and better resource efficiency.

Native PyTorch support for TPUs, which we call TorchTPU, is now in preview with select customers. With TorchTPU, you can run models on TPUs as is, with full support for native PyTorch features like eager mode. Combined with our robust support for vLLM on TPU, our message is clear: we always focus on building for openness and customer choice.

Your foundation for agentic growth

To innovate quickly and cost-effectively in the agentic era, you need a unified system that doesn’t compromise on performance or choice. That is exactly what AI Hypercomputer delivers. By co-designing every layer — from the silicon to the software — we remove the integration burden so your teams can focus on driving your business forward.

AI Hypercomputer also serves as the powerful foundation for Google’s entire ecosystem of high-level services. This integrated stack powers everything from Gemini Enterprise to the Gemini Enterprise Agent Platform, ensuring that all these infrastructure innovations translate directly into business value. By leveraging our fully managed services, such as our serverless training service and our new Managed RL API, you can apply AI Hypercomputer’s massive performance gains to customize Gemini with your own business logic, delivering sophisticated, agent-based solutions.

We’re looking forward to seeing what you build next with this updated and expanded AI platform.