New innovations in Google Distributed Cloud

Muninder Sambi

VP, Google Distributed Cloud

Today at Google Cloud Next, we’re announcing new capabilities in Google Distributed Cloud (GDC) that bring Gemini and our advanced AI stack to wherever your data is, so you don’t need to compromise between AI innovation and sovereignty. This will serve as a catalyst for a sovereign neocloud architecture.

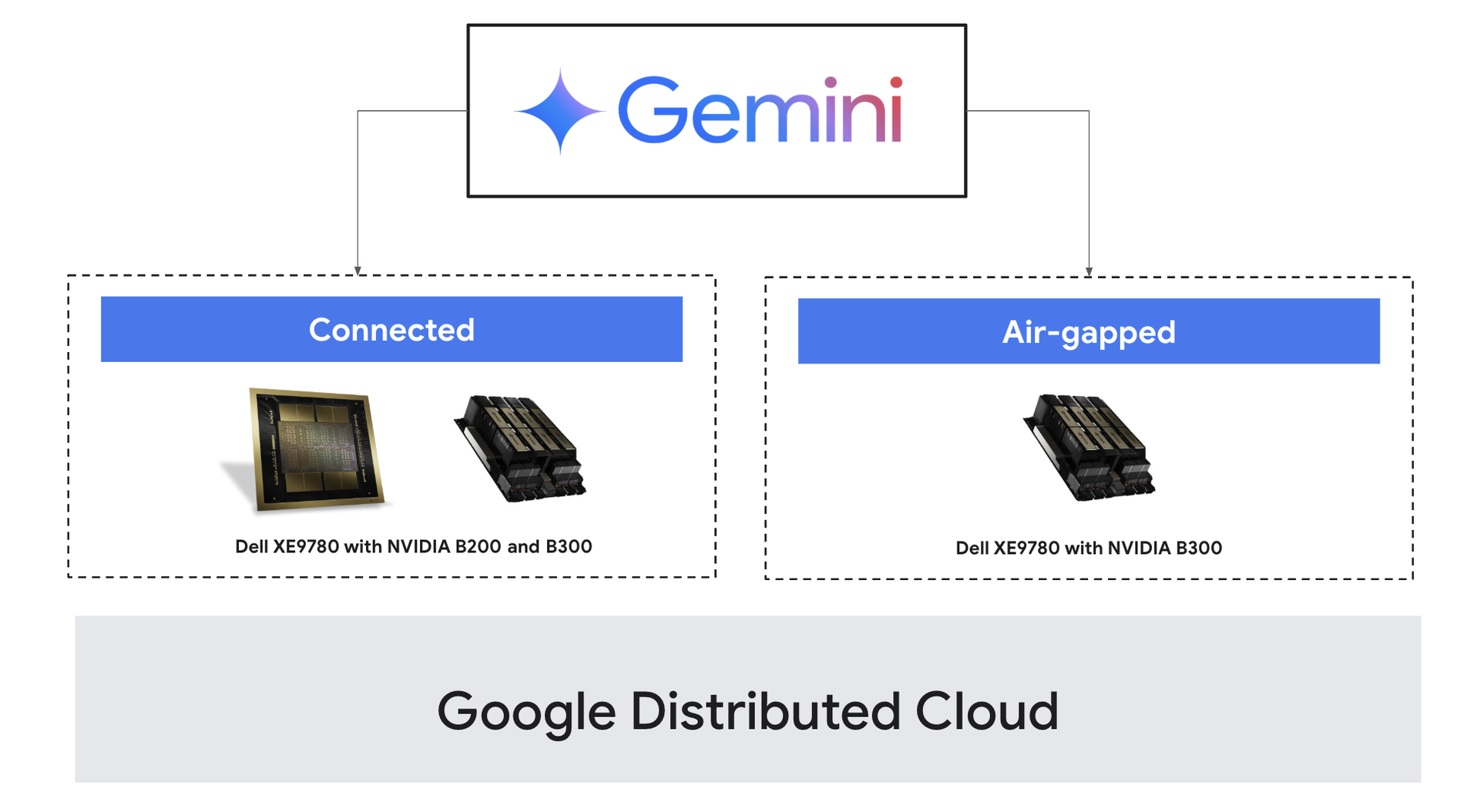

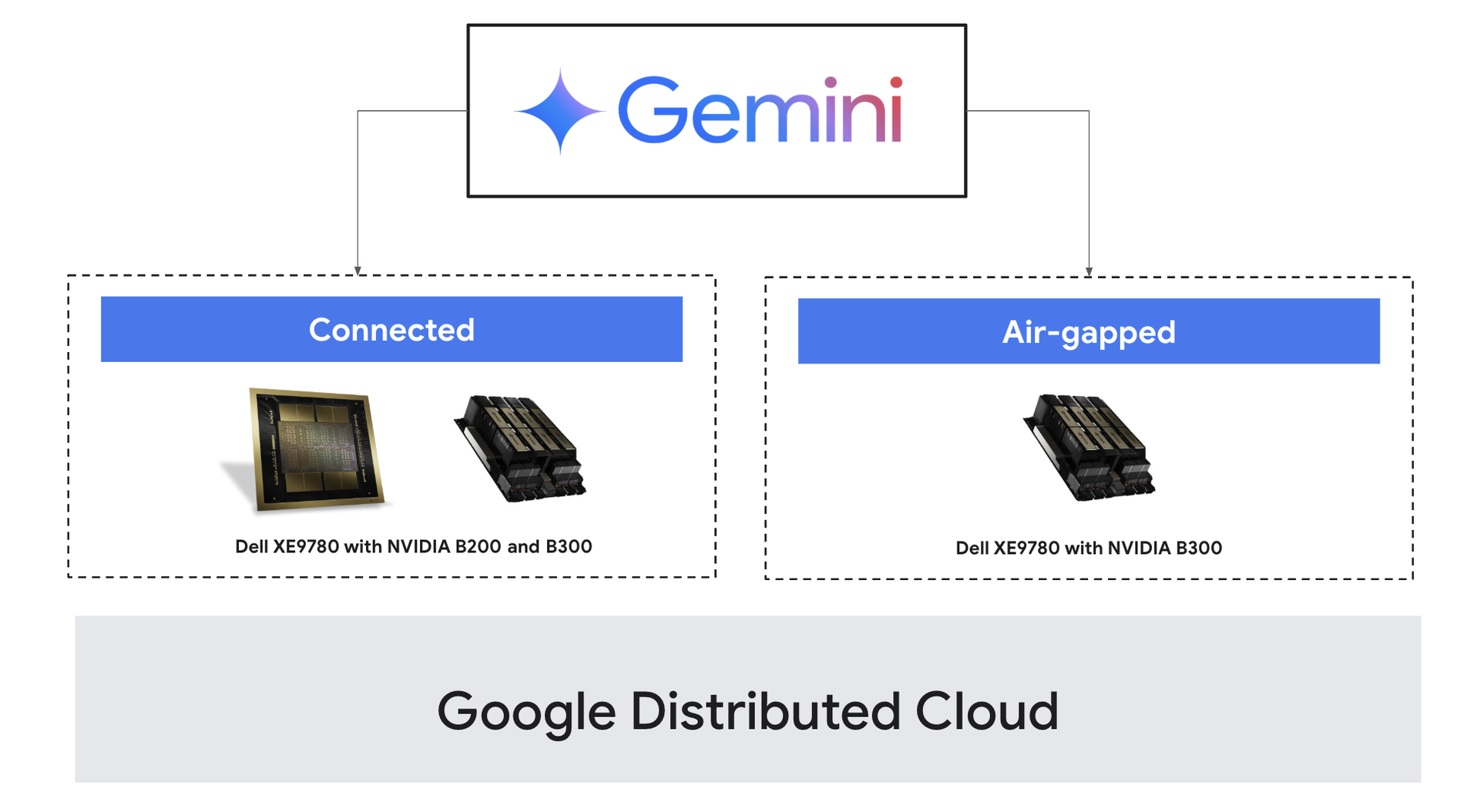

GDC brings Google Cloud to wherever you need it, whether in your own data center or at the edge. It is offered in two distinct models to meet your specific security and hardware requirements: GDC air-gapped, a fully disconnected deployment that runs on purpose-built, Google-supplied hardware designed for maximum security and compliance; and GDC connected, where you benefit from an integrated, Google-managed software lifecycle on your own hardware.

Traditionally, enterprises and governments with strict data regulatory and sovereignty requirements were locked out of the latest AI capabilities. Their only choice was to build their own systems, which is slow, complicated, and expensive. GDC ends that struggle. You get world-class AI innovation on your own premises without the toil.

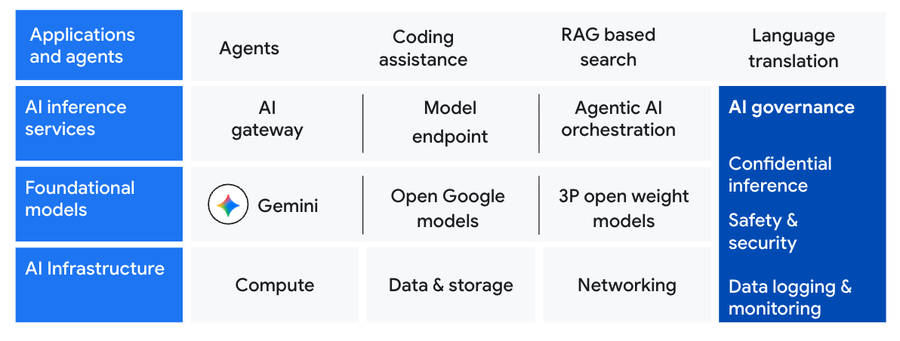

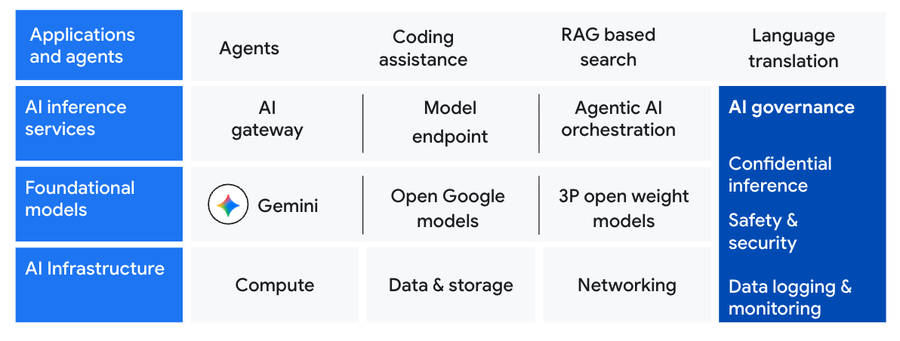

GDC delivers a complete, on-premises AI solution: managed infrastructure optimized for AI workloads, a choice of Gemini or open models for flexibility, and efficient, cost-effective inference services. This foundation allows you to build and run secure AI agents and applications while maintaining total control over your data.

Let’s take you through how the new innovations in GDC come together to support your sovereign AI workloads.

Managed AI infrastructure

To support sovereign AI needs on-premises, organizations require managed infrastructure that can handle the massive performance demands of compute, storage, and networking. Because on-premises AI workloads are dynamic and unpredictable, we are introducing new infrastructure innovations that deliver peak performance across a variety of requirements:

- NVIDIA Blackwell GPUs: Accelerate AI performance with the NVIDIA Blackwell (NVIDIA HGX B200) and NVIDIA Blackwell Ultra (NVIDIA HGX B300) platforms, which use fifth-generation NVIDIA NVLink to deliver data-center-scale bandwidth directly to your environment.

- Google Cloud machine families: GDC already supports the N2 and N3 machine families for general-purpose workloads, and it now supports the new A4 machine family, which delivers a 2.25x increase in peak compute to handle demanding inference tasks. We’re also bringing the memory-optimized M2 and M3 machine families to GDC for workloads like ERP and data analytics that require higher memory-to-vCPU ratios.

- Enhanced storage scale and performance: GDC now supports 6 PB of object storage per zone, up from 1 PB, a 6x increase in capacity. It also offers 30 IOPS/GB per zone, up from 3 IOPS/GB, a 10x performance boost that minimizes storage bottlenecks.
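To make the storage figures concrete, here is a small arithmetic sketch in Python. The capacity and IOPS/GB numbers come from the announcement above; the helper names, the 500 GB example volume, and the assumption that available IOPS scale linearly with provisioned capacity are illustrative only and are not a description of how GDC provisions storage.

```python
# Figures quoted in the announcement (per zone).
OBJECT_STORAGE_PB_BEFORE = 1
OBJECT_STORAGE_PB_NOW = 6
IOPS_PER_GB_BEFORE = 3
IOPS_PER_GB_NOW = 30


def improvement_factor(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


def iops_for_capacity(capacity_gb: float, iops_per_gb: float) -> float:
    """Estimate IOPS for a volume, ASSUMING linear scaling with capacity.

    The linear model is an illustrative assumption, not a documented
    GDC provisioning rule.
    """
    return capacity_gb * iops_per_gb


capacity_gain = improvement_factor(OBJECT_STORAGE_PB_NOW, OBJECT_STORAGE_PB_BEFORE)
iops_gain = improvement_factor(IOPS_PER_GB_NOW, IOPS_PER_GB_BEFORE)

# Hypothetical 500 GB volume, before and after the change.
iops_before = iops_for_capacity(500, IOPS_PER_GB_BEFORE)
iops_now = iops_for_capacity(500, IOPS_PER_GB_NOW)
```

Under that linear assumption, a hypothetical 500 GB volume would go from 1,500 to 15,000 IOPS, which matches the 10x figure quoted above.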

Foundational models in your data center

With GDC, you can bring Google’s flagship Gemini models directly into your environment. Native deployment within your own perimeter, now powered by the latest-generation NVIDIA Blackwell GPUs, bridges the gap between world-class generative AI and strict data sovereignty.

Today, we are excited to announce that the latest Gemini Flash models are now available (in preview) on the NVIDIA Blackwell and Blackwell Ultra platforms for GDC connected customers, joining our existing support for GDC air-gapped customers.

"Deploying Gemini on Google Distributed Cloud has significantly improved our global manufacturing. Running frontier AI locally allows us to analyze IoT data for real-time predictive maintenance and quality control, avoiding cloud latency. We maintain strict data sovereignty over our IP while retaining cloud-like agility." - Junhee Lee, CEO, Samsung SDS

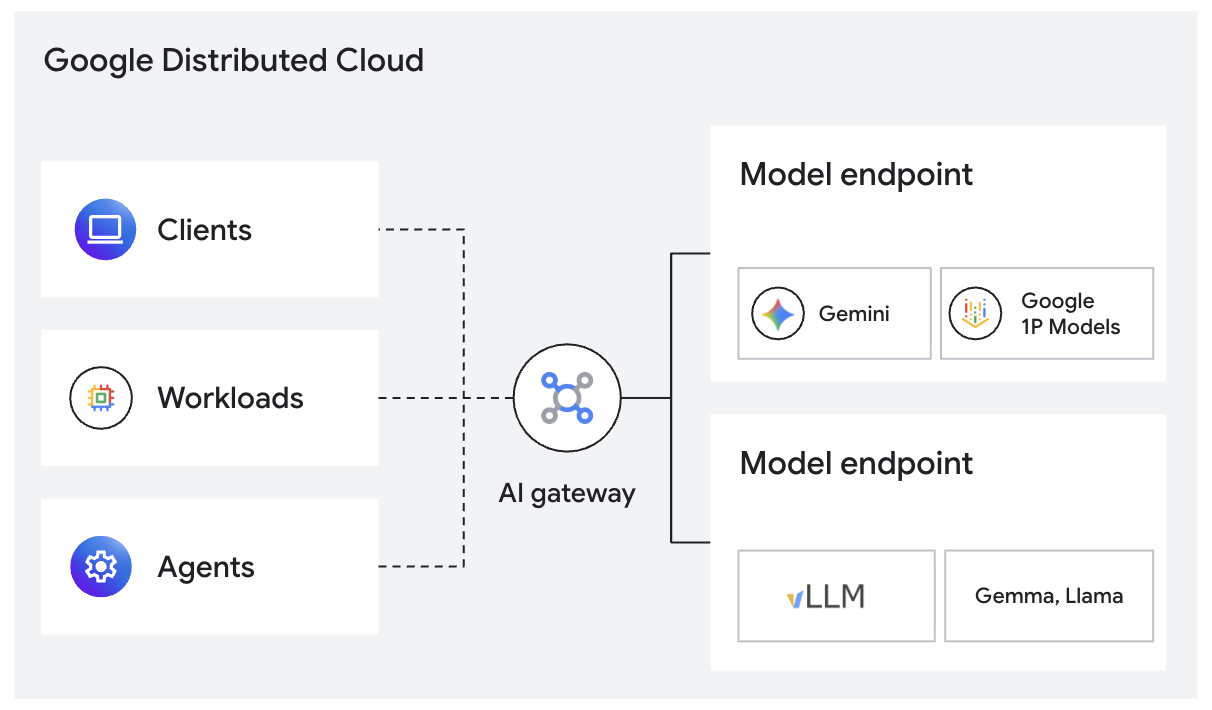

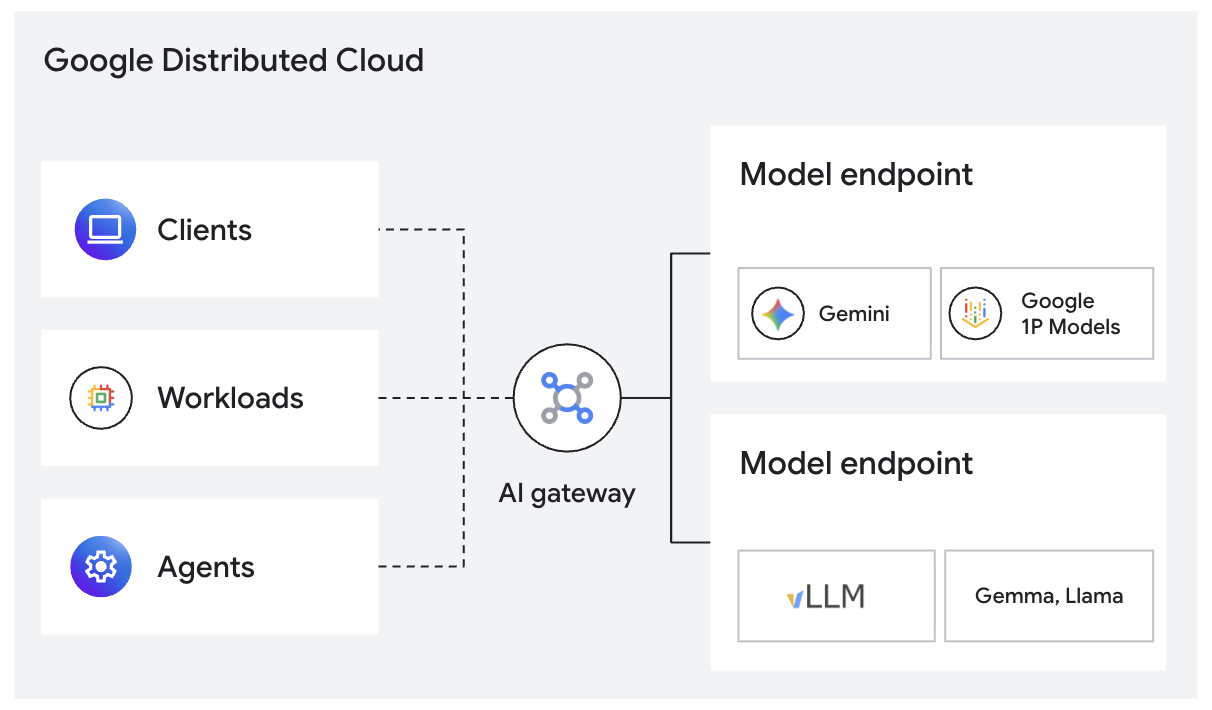

AI Inferencing services: Introducing Google Distributed Cloud AI gateway

To optimize performance and abstract infrastructure complexity, we are introducing the AI gateway for sovereign environments. This intelligent middleware acts as the control plane for your models and provides:

- Dynamic request routing: Automatically routes inference requests to the right AI model based on cost, latency, and accuracy, rather than on hard-coded logic.

- Intelligent load balancing: Routes requests for optimized inference efficiency, picking GPUs based on their utilization.

- Quota management: Prioritizes requests so that high-priority applications receive the throughput they require and quota management goals are met.

- Observability: Built-in tracing and logging for every inference call, helping ensure auditability for compliance-heavy environments.
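The routing, load-balancing, and prioritization ideas in the list above can be sketched in a few lines of Python. This is a conceptual illustration only and not the AI gateway's API or implementation: `ModelProfile`, `RoutingPolicy`, `pick_model`, `pick_gpu`, and `PriorityAdmission` are hypothetical names, and the "cheapest model that meets the latency and accuracy limits" rule is one simple policy assumed for the example.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical per-model metadata a gateway might track."""
    name: str
    cost_per_1k_tokens: float  # relative cost units
    p95_latency_ms: float
    accuracy: float            # 0.0 to 1.0, e.g. from an offline evaluation


@dataclass(frozen=True)
class RoutingPolicy:
    """Hypothetical per-request constraints."""
    max_latency_ms: float
    min_accuracy: float


def pick_model(models, policy):
    """Return the cheapest model that meets the latency and accuracy limits."""
    eligible = [
        m for m in models
        if m.p95_latency_ms <= policy.max_latency_ms
        and m.accuracy >= policy.min_accuracy
    ]
    if not eligible:
        raise LookupError("no model satisfies the routing policy")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


def pick_gpu(utilization):
    """Return the id of the least-utilized GPU.

    `utilization` maps a GPU id to its current utilization (0.0 to 1.0).
    """
    if not utilization:
        raise LookupError("no GPUs available")
    return min(utilization, key=utilization.get)


class PriorityAdmission:
    """Serve pending requests in priority order (lower number is served first).

    A counter breaks ties so equal-priority requests keep arrival order.
    """

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()

    def submit(self, request_id, priority):
        heapq.heappush(self._heap, (priority, next(self._arrival), request_id))

    def next_request(self):
        return heapq.heappop(self._heap)[2]
```

As a usage example, given a hypothetical `small-model` (cost 0.2, 120 ms, accuracy 0.82) and `large-model` (cost 1.0, 450 ms, accuracy 0.93), a policy of `RoutingPolicy(max_latency_ms=200.0, min_accuracy=0.80)` selects `small-model`, while `RoutingPolicy(max_latency_ms=1000.0, min_accuracy=0.90)` selects `large-model`. A production gateway would likely weigh these signals continuously instead of applying hard thresholds; the thresholds here just keep the sketch easy to follow.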

Agentic AI applications and agents

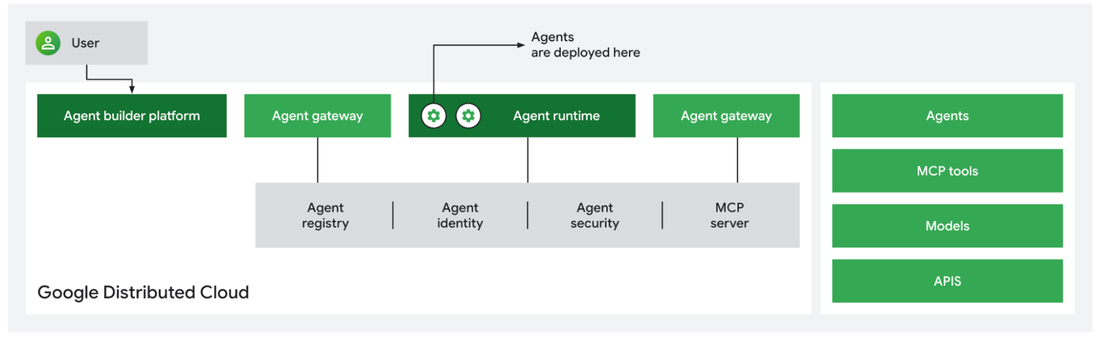

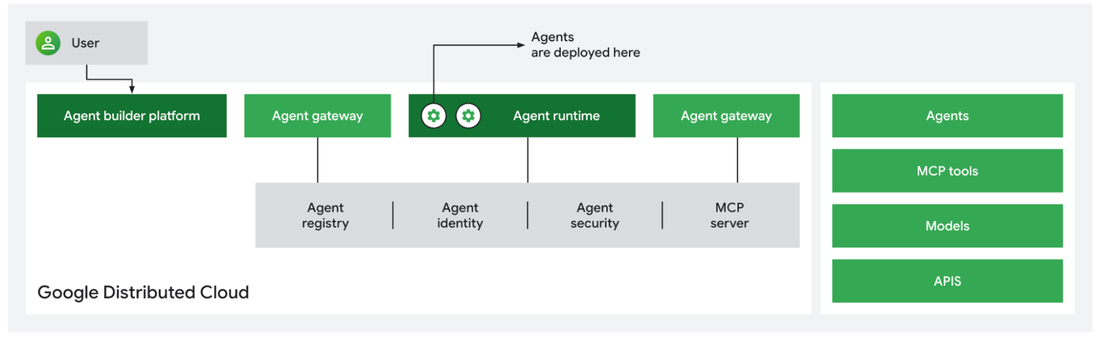

To truly operationalize AI at the edge, organizations need more than just foundational models. They need autonomous, secure agents built on an agentic AI architecture that can take action. We are thrilled to announce a new sovereign agentic AI architecture for Google Distributed Cloud. Built with third-party providers on Kubernetes, this architecture helps ensure that your agentic workflows execute entirely within your secure Customer Organization boundary.

Using this architecture, you can build and deploy powerful AI agents for tasks like development, coding, or data analysis, all within your secure perimeter.

AI anywhere with Google Distributed Cloud

We believe GDC is the best platform to serve Google and other models on-premises, both connected and air-gapped, enabling all customers to leverage AI and agentic solutions without compromising on sovereignty. To learn more about these product offerings, visit our website. The innovations we discussed here deliver the flexibility and security required for the sovereign AI era. To see them in action, join our GDC breakout sessions or the Showcase at Next ’26.