If your organization uses Shared VPC, you can set up a Serverless VPC Access connector in either the service project or the host project. This guide shows how to set up a connector in the host project.

If you need to set up a connector in a service project, see Configure connectors in service projects. To learn about the advantages of each method, see Connecting to a Shared VPC network.

Before you begin

- Check the Identity and Access Management (IAM) roles for the account you are currently using. The active account must have the following roles on the host project:

- Select the host project in your preferred environment.

Console

- Open the Google Cloud console dashboard.

- In the menu bar at the top of the dashboard, click the project dropdown menu and select the host project.

gcloud

Set the default project in the gcloud CLI to the host project by running the following in your terminal:

gcloud config set project HOST_PROJECT_ID

Replace the following:

- HOST_PROJECT_ID: the ID of the Shared VPC host project

Create a Serverless VPC Access connector

To send requests to your VPC network and receive the corresponding responses, you must create a Serverless VPC Access connector. You can create a connector by using the Google Cloud console, Google Cloud CLI, or Terraform:

Console

- Enable the Serverless VPC Access API for your project.

- Go to the Serverless VPC Access overview page.

- Click Create connector.

- In the Name field, enter a name for your connector. The name must follow the Compute Engine naming convention and be less than 21 characters. Hyphens (-) count as two characters.

- In the Region field, select a region for your connector. This must match the region of your serverless service.

  If your service is in the region us-central or europe-west, use us-central1 or europe-west1.

- In the Network field, select the VPC network to attach your connector to.

- Click the Subnetwork pulldown menu:

  Select an unused /28 subnet.

  - Subnets must be used exclusively by the connector. They cannot be used by other resources such as VMs, Private Service Connect, or load balancers.

  - To confirm that your subnet is not used for Private Service Connect or Cloud Load Balancing, check that the subnet purpose is PRIVATE by running the following command in the gcloud CLI:

    gcloud compute networks subnets describe SUBNET_NAME

    Replace SUBNET_NAME with the name of your subnet.

- (Optional) To set scaling options for additional control over the connector, click Show Scaling Settings to display the scaling form.

  - Set the minimum and maximum number of instances for your connector, or use the defaults, which are 2 (min) and 10 (max). The connector scales out to the maximum specified as traffic increases, but the connector does not scale back in when traffic decreases. You must use values between 2 and 10, and the MIN value must be less than the MAX value.

  - In the Instance Type pulldown menu, choose the machine type to be used for the connector, or use the default e2-micro. Notice the cost sidebar on the right when you choose the instance type, which displays bandwidth and cost estimations.

- Click Create.

- A green check mark will appear next to the connector's name when it is ready to use.
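The naming, region, and scaling rules above can be checked before you create a connector. The following is a minimal sketch in POSIX shell, not an official tool. The function names are hypothetical, and the hyphen rule is read here as "each hyphen adds one extra character toward the limit", which is an assumption about how the limit is counted.

```shell
# Hypothetical helpers; none of these names come from the gcloud CLI.

# Succeeds if NAME follows the Compute Engine naming convention and stays
# under 21 characters when each hyphen is counted twice (assumed reading).
connector_name_ok() {
  name=$1
  printf '%s\n' "$name" | LC_ALL=C grep -Eq '^[a-z]([-a-z0-9]*[a-z0-9])?$' || return 1
  without_hyphens=$(printf '%s' "$name" | tr -d '-')
  hyphens=$(( ${#name} - ${#without_hyphens} ))
  [ $(( ${#name} + hyphens )) -lt 21 ]
}

# Maps the two region names mentioned above to their connector regions;
# any other region is passed through unchanged.
connector_region() {
  case $1 in
    us-central)  echo us-central1 ;;
    europe-west) echo europe-west1 ;;
    *)           echo "$1" ;;
  esac
}

# Succeeds if MIN and MAX are integers in the range 2..10 with MIN < MAX.
scaling_ok() {
  min=$1 max=$2
  for n in "$min" "$max"; do
    case $n in ''|*[!0-9]*) return 1 ;; esac
  done
  [ "$min" -ge 2 ] && [ "$max" -le 10 ] && [ "$min" -lt "$max" ]
}
```

For example, `connector_name_ok my-connector && scaling_ok 2 5` succeeds, while `scaling_ok 5 5` fails because the minimum must be less than the maximum.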

gcloud

- Update gcloud components to the latest version:

  gcloud components update

- Enable the Serverless VPC Access API for your project:

  gcloud services enable vpcaccess.googleapis.com

- Create a Serverless VPC Access connector:

  # Optional flags: --min-instances and --max-instances take values between 2 and 10
  # (defaults: 2 min, 10 max); --machine-type defaults to e2-micro.
  gcloud compute networks vpc-access connectors create CONNECTOR_NAME \
      --region=REGION \
      --subnet=SUBNET \
      --subnet-project=HOST_PROJECT_ID \
      --min-instances=MIN \
      --max-instances=MAX \
      --machine-type=MACHINE_TYPE

  Replace the following:

  - CONNECTOR_NAME: a name for your connector. The name must follow the Compute Engine naming convention and be less than 21 characters. Hyphens (-) count as two characters.

  - REGION: a region for your connector; this must match the region of your serverless service. If your service is in the region us-central or europe-west, use us-central1 or europe-west1.

  - SUBNET: the name of an unused /28 subnet.

    - Subnets must be used exclusively by the connector. They cannot be used by other resources such as VMs, Private Service Connect, or load balancers.

    - To confirm that your subnet is not used for Private Service Connect or Cloud Load Balancing, check that the subnet purpose is PRIVATE by running the following command in the gcloud CLI:

      gcloud compute networks subnets describe SUBNET_NAME

      Replace SUBNET_NAME with the name of your subnet.

  - HOST_PROJECT_ID: the ID of the host project

  - MIN: the minimum number of instances to use for the connector. Use an integer between 2 and 9. Default is 2. To learn about connector scaling, see Throughput and scaling.

  - MAX: the maximum number of instances to use for the connector. Use an integer between 3 and 10. Default is 10. If traffic requires it, the connector scales out to [MAX] instances, but does not scale back in. To learn about connector scaling, see Throughput and scaling.

  - MACHINE_TYPE: f1-micro, e2-micro, or e2-standard-4. To learn about connector throughput, including machine type and scaling, see Throughput and scaling.

  For more details and optional arguments, see the gcloud reference.

- Verify that your connector is in the READY state before using it:

  gcloud compute networks vpc-access connectors describe CONNECTOR_NAME \
      --region=REGION

  Replace the following:

  - CONNECTOR_NAME: the name of your connector; this is the name that you specified in the previous step

  - REGION: the region of your connector; this is the region that you specified in the previous step

  The output should contain the line state: READY.
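If you script the connector setup, the READY check above can be turned into a polling loop. The following is a sketch under stated assumptions: the function name, the default of 30 attempts, and the 10-second interval are all hypothetical choices, and `--format='value(state)'` is used on the assumption that the `state` field shown in the describe output can be selected this way.

```shell
# Hypothetical helper: polls the describe command until the connector
# reports READY, or gives up after ATTEMPTS tries.
# Usage: wait_for_connector_ready CONNECTOR_NAME REGION [ATTEMPTS] [INTERVAL_SECONDS]
wait_for_connector_ready() {
  connector=$1 region=$2
  attempts=${3:-30} interval=${4:-10}
  state=""
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Stop immediately if the describe call itself fails.
    state=$(gcloud compute networks vpc-access connectors describe "$connector" \
      --region="$region" --format='value(state)') || return 1
    [ "$state" = "READY" ] && return 0
    i=$(( i + 1 ))
    # Sleep only if another attempt remains.
    if [ "$i" -lt "$attempts" ]; then sleep "$interval"; fi
  done
  echo "connector $connector is still in state: ${state:-unknown}" >&2
  return 1
}
```

A call such as `wait_for_connector_ready CONNECTOR_NAME REGION` returns success once the connector is ready, so it can gate later steps in a setup script.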

Terraform

You can use a Terraform resource to enable the vpcaccess.googleapis.com API.

You can use Terraform modules to create a VPC network and subnet and then create the connector.

Enable Cloud Run for the service project

Enable the Cloud Run API for the service project. This is necessary for adding IAM roles in subsequent steps and for the service project to use Cloud Run.

Console

- Open the page for the Cloud Run API.

- In the menu bar at the top of the dashboard, click the project dropdown menu and select the service project.

- Click Enable.

gcloud

Run the following in your terminal:

gcloud services enable run.googleapis.com --project=SERVICE_PROJECT_ID

Replace the following:

- SERVICE_PROJECT_ID: the ID of the service project

Provide access to the connector

Provide access to the connector by granting the service project Cloud Run Service Agent the Serverless VPC Access User IAM role on the host project.

Console

- Open the IAM page.

- Click the project dropdown menu and select the host project.

- Click Add.

- In the New principals field, enter the email address of the Cloud Run Service Agent for the Cloud Run service:

  service-SERVICE_PROJECT_NUMBER@serverless-robot-prod.iam.gserviceaccount.com

  Replace the following:

  - SERVICE_PROJECT_NUMBER: the project number associated with the service project. This is different from the project ID. You can find the project number on the service project's Project Settings page in the Google Cloud console.

- In the Role field, select Serverless VPC Access User.

- Click Save.

gcloud

Run the following in your terminal:

gcloud projects add-iam-policy-binding HOST_PROJECT_ID \
    --member=serviceAccount:service-SERVICE_PROJECT_NUMBER@serverless-robot-prod.iam.gserviceaccount.com \
    --role=roles/vpcaccess.user

Replace the following:

- HOST_PROJECT_ID: the ID of the Shared VPC host project

- SERVICE_PROJECT_NUMBER: the project number associated with the service project. This is different from the project ID. You can find the project number by running the following:

  gcloud projects describe SERVICE_PROJECT_ID
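Because the member address is built from the project number rather than the project ID, it is easy to get wrong. The following sketch builds the Cloud Run Service Agent address from a project number and rejects non-numeric input. The function name is hypothetical; the address pattern is the one shown above.

```shell
# Hypothetical helper: prints the Cloud Run Service Agent email for a
# project number, following the address pattern shown above.
cloud_run_service_agent() {
  case $1 in
    ''|*[!0-9]*)
      echo "expected a numeric project number, got: $1" >&2
      return 1
      ;;
  esac
  printf 'service-%s@serverless-robot-prod.iam.gserviceaccount.com\n' "$1"
}
```

One way to obtain the number in a script, assuming the `projectNumber` field of the describe output, is `gcloud projects describe SERVICE_PROJECT_ID --format='value(projectNumber)'`. The result of `cloud_run_service_agent` can then be passed as `--member="serviceAccount:..."`.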

Make the connector discoverable

On the host project's IAM policy, grant the following predefined roles to the principals who deploy Cloud Run services:

- Serverless VPC Access Viewer (vpcaccess.viewer): Required.

- Compute Network Viewer (compute.networkViewer): Optional but recommended. Allows the IAM principal to enumerate subnets in the Shared VPC network.

Alternatively, you can use custom roles or other predefined roles that include all the permissions of the Serverless VPC Access Viewer (vpcaccess.viewer) role.

Console

- Open the IAM page.

- Click the project dropdown menu and select the host project.

- Click Add.

- In the New principals field, enter the email address of the principal that should be able to see the connector from the service project. You can enter multiple emails in this field.

- In the Role field, select both of the following roles:

  - Serverless VPC Access Viewer

  - Compute Network Viewer

- Click Save.

gcloud

Run the following commands in your terminal:

gcloud projects add-iam-policy-binding HOST_PROJECT_ID \
    --member=PRINCIPAL \
    --role=roles/vpcaccess.viewer

gcloud projects add-iam-policy-binding HOST_PROJECT_ID \
    --member=PRINCIPAL \
    --role=roles/compute.networkViewer

Replace the following:

- HOST_PROJECT_ID: the ID of the Shared VPC host project

- PRINCIPAL: the principal who deploys Cloud Run services. Learn more about the --member flag.
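When several principals deploy Cloud Run services, the two commands above can be wrapped in a loop. This is a sketch only: the function name is hypothetical, and it assumes you want to grant both roles, including the optional Compute Network Viewer role, to every principal.

```shell
# Hypothetical helper: grants both viewer roles on the host project to each
# principal listed after the project ID. Stops at the first failed binding.
# Usage: grant_connector_viewer_roles HOST_PROJECT_ID PRINCIPAL [PRINCIPAL...]
grant_connector_viewer_roles() {
  host_project=$1
  shift
  for principal in "$@"; do
    for role in roles/vpcaccess.viewer roles/compute.networkViewer; do
      gcloud projects add-iam-policy-binding "$host_project" \
        --member="$principal" --role="$role" || return 1
    done
  done
}
```

For example, `grant_connector_viewer_roles HOST_PROJECT_ID user:alice@example.com group:deployers@example.com` issues four bindings; the two addresses are placeholders.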

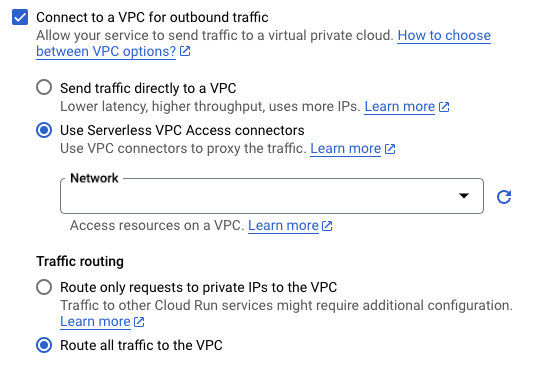

Configure your service to use the connector

For each Cloud Run service that requires access to your Shared VPC, you must specify the connector for the service. You can specify the connector by using the Google Cloud console, the Google Cloud CLI, a YAML file, or Terraform when deploying a new service or updating an existing service.

Console

- In the Google Cloud console, go to Cloud Run.

- Select Services from the Cloud Run navigation menu, and click Deploy container to configure a new service. If you are configuring an existing service, click the service, then click Edit and deploy new revision.

- If you are configuring a new service, fill out the initial service settings page, then click Container(s), Volumes, Networking, Security to expand the service configuration page.

- Click the Connections tab.

  - In the VPC Connector field, select a connector to use or select None to disconnect your service from a VPC network.

- Click Create or Deploy.

gcloud

- Set the gcloud CLI to use the project containing the Cloud Run resource:

  gcloud config set project PROJECT_ID

  Replace the following:

  - PROJECT_ID: the ID of the project containing the Cloud Run resource that requires access to your Shared VPC. If the Cloud Run resource is in the host project, this is the host project ID. If the Cloud Run resource is in a service project, this is the service project ID.

- Use the --vpc-connector flag.

  - For existing services:

    gcloud run services update SERVICE --vpc-connector=CONNECTOR_NAME

  - For new services:

    gcloud run deploy SERVICE --image=IMAGE_URL --vpc-connector=CONNECTOR_NAME

  Replace the following:

  - SERVICE: the name of your service

  - IMAGE_URL: a reference to the container image, for example, us-docker.pkg.dev/cloudrun/container/hello:latest

  - CONNECTOR_NAME: the name of your connector. Use the fully qualified name when deploying from a Shared VPC service project (as opposed to the host project), for example:

    projects/HOST_PROJECT_ID/locations/CONNECTOR_REGION/connectors/CONNECTOR_NAME

    HOST_PROJECT_ID is the ID of the host project, CONNECTOR_REGION is the region of your connector, and CONNECTOR_NAME is the name that you gave your connector.
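When deploying from a service project, the fully qualified connector name described above has to be assembled from three values. A small sketch of that assembly follows; the function name is hypothetical and the path format is the one given above.

```shell
# Hypothetical helper: prints the fully qualified connector name.
# Usage: connector_resource_name HOST_PROJECT_ID CONNECTOR_REGION CONNECTOR_NAME
connector_resource_name() {
  if [ "$#" -ne 3 ]; then
    echo "usage: connector_resource_name HOST_PROJECT_ID CONNECTOR_REGION CONNECTOR_NAME" >&2
    return 1
  fi
  printf 'projects/%s/locations/%s/connectors/%s\n' "$1" "$2" "$3"
}
```

The output can be passed directly to the flag, for example `--vpc-connector="$(connector_resource_name HOST_PROJECT_ID CONNECTOR_REGION CONNECTOR_NAME)"`.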

YAML

- Set the gcloud CLI to use the project containing the Cloud Run resource:

  gcloud config set project PROJECT_ID

  Replace the following:

  - PROJECT_ID: the ID of the project containing the Cloud Run resource that requires access to your Shared VPC. If the Cloud Run resource is in the host project, this is the host project ID. If the Cloud Run resource is in a service project, this is the service project ID.

- If you are creating a new service, skip this step. If you are updating an existing service, download its YAML configuration:

  gcloud run services describe SERVICE --format export > service.yaml

- Add or update the run.googleapis.com/vpc-access-connector attribute under the annotations attribute under the top-level spec attribute:

  apiVersion: serving.knative.dev/v1
  kind: Service
  metadata:
    name: SERVICE
  spec:
    template:
      metadata:
        annotations:
          run.googleapis.com/vpc-access-connector: CONNECTOR_NAME
        name: REVISION

  Replace the following:

  - SERVICE: the name of your Cloud Run service.

  - CONNECTOR_NAME: the name of your connector. Use the fully qualified name when deploying from a Shared VPC service project (as opposed to the host project), for example:

    projects/HOST_PROJECT_ID/locations/CONNECTOR_REGION/connectors/CONNECTOR_NAME

    HOST_PROJECT_ID is the ID of the host project, CONNECTOR_REGION is the region of your connector, and CONNECTOR_NAME is the name that you gave your connector.

  - REVISION: a new revision name, or delete it (if present). If you supply a new revision name, it must meet the following criteria:

    - Starts with SERVICE-
    - Contains only lowercase letters, numbers and -
    - Does not end with a -
    - Does not exceed 63 characters

- Replace the service with its new configuration using the following command:

  gcloud run services replace service.yaml
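Before replacing the service, it can help to confirm that the edited file actually carries the connector annotation. The following sketch reads the annotation value with sed. The function name is hypothetical, and it assumes the annotation appears on a single line with an unquoted value, as in the example above.

```shell
# Hypothetical helper: prints the connector referenced in a service YAML
# file, or fails if the annotation is missing or empty.
# Usage: connector_in_yaml service.yaml
connector_in_yaml() {
  value=$(sed -n 's|^[[:space:]]*run\.googleapis\.com/vpc-access-connector:[[:space:]]*||p' "$1" | head -n 1)
  [ -n "$value" ] || return 1
  printf '%s\n' "$value"
}
```

For example, `connector_in_yaml service.yaml || echo "no connector set"` flags a file that would deploy without a connector.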

Terraform

You can use a Terraform resource to create a service and configure it to use your connector.

Next steps

- Monitor administrative activities with Serverless VPC Access audit logging.

- Protect resources and data by creating a service perimeter with VPC Service Controls.

- Use network tags to restrict connector VM access to VPC resources.

- Learn about the Identity and Access Management (IAM) roles associated with Serverless VPC Access. See Serverless VPC Access roles in the IAM documentation for a list of permissions associated with each role.

- Learn how to connect to Memorystore.