This topic explains how to view Apigee hybrid metrics in a Stackdriver dashboard.

About Stackdriver

For more information about metrics, dashboards, and Stackdriver, see:

Enabling hybrid metrics

Before hybrid metrics can be sent to Stackdriver, you must first enable metrics collection. See Configure metrics collection for this procedure.

About hybrid metric names and labels

When metrics collection is enabled, hybrid automatically populates Stackdriver metrics. The domain name prefix of the metrics created by hybrid is:

apigee.googleapis.com/

For example, the /proxy/request_count metric contains the total number of requests received by an API proxy. The metric name in Stackdriver is therefore:

apigee.googleapis.com/proxy/request_count
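When you query or chart these metrics outside the console, you need the fully qualified metric type. The following Python sketch applies the naming rule described above: the apigee.googleapis.com/ prefix joined with a short metric name. The function name full_metric_type is hypothetical and used only for illustration.

```python
# Domain prefix that hybrid uses for the metrics it writes to Stackdriver.
APIGEE_METRIC_PREFIX = "apigee.googleapis.com/"


def full_metric_type(short_name: str) -> str:
    """Return the Stackdriver metric type for a short hybrid metric name,
    for example "/proxy/request_count"."""
    # Strip the leading slash so the prefix and name join with a single "/".
    return APIGEE_METRIC_PREFIX + short_name.lstrip("/")


print(full_metric_type("/proxy/request_count"))
# apigee.googleapis.com/proxy/request_count
```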

Stackdriver lets you filter and group metrics data based on labels. Some labels are predefined, and others are added explicitly by hybrid. The Available metrics section below lists all of the available hybrid metrics and any labels added specifically for a metric that you can use for filtering and grouping.
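Outside Metrics Explorer, the same label-based filtering can be expressed as a Cloud Monitoring filter string. The sketch below assumes the Monitoring filter syntax, in which metric.type and metric.labels.KEY comparisons are joined with AND. The method label comes from the Available metrics tables; the value GET and the helper name monitoring_filter are hypothetical.

```python
from typing import Dict


def monitoring_filter(metric_type: str, labels: Dict[str, str]) -> str:
    """Build a filter that selects one metric type and restricts it to
    the given metric label values."""
    clauses = [f'metric.type = "{metric_type}"']
    # Sort by label key so the same inputs always yield the same string.
    for key in sorted(labels):
        clauses.append(f'metric.labels.{key} = "{labels[key]}"')
    return " AND ".join(clauses)


print(monitoring_filter("apigee.googleapis.com/proxy/latencies", {"method": "GET"}))
# metric.type = "apigee.googleapis.com/proxy/latencies" AND metric.labels.method = "GET"
```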

Viewing metrics

The following example shows how to view metrics in Stackdriver:- Open the Monitoring Metrics Explorer in a browser. Alternatively, if you're already in the Stackdriver console, select Metrics explorer.

-

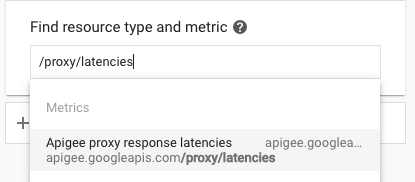

In Find resource type and metric, locate and select the metric you want to examine. Choose a specific metric listed in Available metrics , or search for a metric. For example, search for

proxy/latencies:

- Select the desired metric.

- Apply filters. Filter choices for each metric are listed in Available metrics

.

For example, for the

proxy_latenciesmetric, filter choices are: org= org_name . - Stackdriver displays the chart for the selected metric.

- Click Save.
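The console steps above are interactive. If you prefer to retrieve the same time series from a script, one option is the timeSeries.list method of the Cloud Monitoring API. The sketch below assumes the google-cloud-monitoring Python client library and application default credentials. The project ID, the helper names build_list_request and fetch_time_series, and the one-hour window are illustrative assumptions. Only build_list_request runs without the library installed.

```python
import time
from typing import Optional


def build_list_request(project_id: str, metric_filter: str,
                       minutes: int = 60, now: Optional[int] = None) -> dict:
    """Assemble list_time_series request fields covering the last
    `minutes` minutes, ending at `now` (Unix seconds, default: current time)."""
    end = int(time.time()) if now is None else now
    return {
        "name": f"projects/{project_id}",
        "filter": metric_filter,
        "interval": {
            "start_time": {"seconds": end - minutes * 60},
            "end_time": {"seconds": end},
        },
    }


def fetch_time_series(request: dict):
    """Send the request with the google-cloud-monitoring client library.

    Imported lazily so that build_list_request works without the library."""
    from google.cloud import monitoring_v3

    client = monitoring_v3.MetricServiceClient()
    full_request = dict(request)
    full_request["interval"] = monitoring_v3.TimeInterval(request["interval"])
    # FULL returns the data points, not just the series headers.
    full_request["view"] = monitoring_v3.ListTimeSeriesRequest.TimeSeriesView.FULL
    return client.list_time_series(request=full_request)


req = build_list_request(
    "my-gcp-project",  # hypothetical project ID
    'metric.type = "apigee.googleapis.com/proxy/latencies"',
    now=1_700_000_000,
)
print(req["interval"])
```

Keeping request construction separate from the network call makes the filter and time window easy to check before any credentials are involved.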

Creating a dashboard

Dashboards are one way for you to view and analyze metric data that is important to you. Stackdriver provides predefined dashboards for the resources and services that you use, and you can also create custom dashboards.

You use a chart to display an Apigee metric in your custom dashboard. With custom dashboards, you have complete control over the charts that are displayed and their configuration. For more information on creating charts, see Creating charts.

The following example shows how to create a dashboard in Stackdriver and then to add charts to view metrics data:

- Open the Monitoring Metrics Explorer in a browser and then select Dashboards.
- Select + Create Dashboard.
- Give the dashboard a name. For example: Hybrid Proxy Request Traffic
- Click Confirm.
- For each chart that you want to add to your dashboard, follow these steps:
  - In the dashboard, select Add chart.
  - Select the desired metric as described above in Viewing metrics.
  - Complete the dialog to define your chart.
  - Click Save. Stackdriver displays data for the selected metric.
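A dashboard can also be described as JSON and created without clicking through the console. The following sketch generates a definition with one chart per metric, assuming the Cloud Monitoring Dashboards API field names displayName, gridLayout, widgets, xyChart, dataSets, timeSeriesQuery, and timeSeriesFilter. The function name dashboard_config is hypothetical. The printed JSON could, for example, be saved to a file and passed to gcloud monitoring dashboards create --config-from-file; check the Dashboards API reference before relying on it.

```python
import json
from typing import List


def dashboard_config(display_name: str, metric_types: List[str]) -> dict:
    """Return a dashboard definition with one chart per metric type."""
    widgets = []
    for metric_type in metric_types:
        widgets.append({
            # Use the part after the domain prefix as the chart title.
            "title": metric_type.split("/", 1)[-1],
            "xyChart": {
                "dataSets": [{
                    "timeSeriesQuery": {
                        "timeSeriesFilter": {
                            "filter": f'metric.type = "{metric_type}"',
                        }
                    }
                }]
            },
        })
    return {"displayName": display_name, "gridLayout": {"widgets": widgets}}


config = dashboard_config(
    "Hybrid Proxy Request Traffic",
    ["apigee.googleapis.com/proxy/request_count",
     "apigee.googleapis.com/proxy/latencies"],
)
print(json.dumps(config, indent=2))
```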

Available metrics

The following tables list metrics for analyzing proxy traffic.

Proxy, target, and server traffic metrics

The Prometheus service collects and processes metrics (as described in Metrics collection) for proxy, target, and server traffic.

The following table describes the metrics and labels that Prometheus uses. These labels are used in the metrics log entries.

| Metric name | Label | Use |
|---|---|---|
| /proxy/request_count | method | The total number of API proxy requests received. |
| /proxy/response_count | method, response_code | The total number of API proxy responses received. |
| /proxy/latencies | method | The total number of milliseconds it took to respond to a call. This time includes the Apigee API proxy overhead and your target server time. |
| /target/request_count | method | The total number of requests sent to the proxy's target. |
| /target/response_count | method | The total number of responses received from the proxy's target. |
| /target/latencies | method | The total number of milliseconds it took to respond to a call. This time does not include the Apigee API proxy overhead. |
| /policy/latencies | policy_name | The total number of milliseconds that this named policy took to execute. |
| /server/fault_count | source | The total number of faults for the server application. For example, the application could be |
| /server/nio | state | The number of open sockets. |
| /server/num_threads | | The number of active non-daemon threads in the server. |
| /server/request_count | method | The total number of requests received by the server application. For example, the application could be |
| /server/response_count | method | The total number of responses sent by the server application. For example, the application could be |
| /server/latencies | method | The latency, in milliseconds, introduced by the server application. For example, the application could be |
| /upstream/request_count | method | The number of requests sent by the server application to its upstream application. For example, for the |
| /upstream/response_count | method | The number of responses received by the server application from its upstream application. For example, for the |
| /upstream/latencies | method | The latency, in milliseconds, incurred at the upstream server application. For example, for the |
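Because /proxy/latencies includes both the Apigee API proxy overhead and target time, while /target/latencies excludes the proxy overhead, the difference between the two gives a rough estimate of time spent inside the proxy. This is a derived estimate, not a metric that hybrid reports. The sketch assumes both values are aggregated the same way (for example, a mean) over the same time window and method label; the function name is hypothetical.

```python
def estimated_proxy_overhead_ms(proxy_latency_ms: float,
                                target_latency_ms: float) -> float:
    """Approximate Apigee proxy overhead from the two latency metrics.

    /proxy/latencies covers proxy overhead plus target time, while
    /target/latencies excludes the proxy overhead, so their difference
    approximates the time spent inside the proxy."""
    if proxy_latency_ms < 0 or target_latency_ms < 0:
        raise ValueError("latencies must be non-negative")
    # The two values come from separately aggregated series, so noise can
    # make the target figure slightly larger; clamp the estimate at zero.
    return max(0.0, proxy_latency_ms - target_latency_ms)


print(estimated_proxy_overhead_ms(120.0, 95.0))  # 25.0
```

If the estimate is high, /policy/latencies (grouped by policy_name) can help show which policies account for it.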

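The response_code label on /proxy/response_count makes it possible to compute the share of server errors returned by a proxy. The sketch below assumes you have already summed response counts per response_code value over a time window (for example, from a chart grouped by response_code) and that the label values are numeric HTTP status codes such as "503". The function name is hypothetical.

```python
from typing import Mapping


def server_error_ratio(counts_by_code: Mapping[str, int]) -> float:
    """Share of responses with a 5xx status code.

    counts_by_code maps a response_code label value (for example "503")
    to the number of responses observed for it over some time window."""
    total = sum(counts_by_code.values())
    if total == 0:
        return 0.0  # no traffic in the window
    errors = sum(n for code, n in counts_by_code.items()
                 if 500 <= int(code) <= 599)
    return errors / total


print(server_error_ratio({"200": 90, "404": 5, "503": 5}))  # 0.05
```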
UDCA metrics

The Prometheus service collects and processes metrics (as described in Metrics collection) for the UDCA service just as it does for other hybrid services.

The following table describes the metrics and labels that Prometheus uses in the UDCA metrics data. These labels are used in the metrics log entries.

| Metric name | Label | Use |
|---|---|---|
| /udca/server/local_file_oldest_ts | dataset, state | The timestamp, in milliseconds since the start of the Unix Epoch, for the oldest file in the dataset. This is computed every 60 seconds and does not reflect the state in real time. If the UDCA is up to date and there are no files waiting to be uploaded when this metric is computed, then this value is 0. If this value keeps increasing, then old files are still on disk. |
| /udca/server/local_file_latest_ts | dataset, state | The timestamp, in milliseconds since the start of the Unix Epoch, for the latest file on disk by state. This is computed every 60 seconds and does not reflect the state in real time. If the UDCA is up to date and there are no files waiting to be uploaded when this metric is computed, then this value is 0. |
| /udca/server/local_file_count | dataset, state | A count of the number of files on disk in the data collection pod. Ideally, the value will be close to 0. A consistently high value indicates that files are not being uploaded or that the UDCA is not able to upload them fast enough. This value is computed every 60 seconds and does not reflect the state of the UDCA in real time. |
| /udca/server/total_latencies | dataset | The time interval, in seconds, between the data file being created and the data file being successfully uploaded. Buckets are 100ms, 250ms, 500ms, 1s, 2s, 4s, 8s, 16s, 32s, and 64s. Histogram for total latency from file creation time to successful upload time. |
| /udca/server/upload_latencies | dataset | The total time, in seconds, that UDCA spent uploading a data file. Buckets are 100ms, 250ms, 500ms, 1s, 2s, 4s, 8s, 16s, 32s, and 64s. The metric is a histogram of total upload latency, including all upstream calls. |
| /udca/upstream/http_error_count | service, dataset, response_code | The total count of HTTP errors that UDCA encountered. This metric helps determine which of the UDCA's external dependencies are failing and for what reason. These errors can arise for various services (getDataLocation, Cloud storage, Token generator) and for various datasets (such as api and trace) with various response codes. |
| /udca/upstream/http_latencies | service, dataset | The upstream latency of services, in seconds. Buckets are 100ms, 250ms, 500ms, 1s, 2s, 4s, 8s, 16s, 32s, and 64s. Histogram for latency from upstream services. |
| /udca/upstream/uploaded_file_sizes | dataset | The size of the file being uploaded to the Apigee services, in bytes. Buckets are 1KB, 10KB, 100KB, 1MB, 10MB, 100MB, and 1GB. Histogram for file size by dataset, organization, and environment. |
| /udca/upstream/uploaded_file_count | dataset | Note that: the event dataset value should keep growing; the api dataset value should keep growing if the org/env has constant traffic; the trace dataset value should increase when you use the Apigee trace tools to debug or inspect your requests. |
| /udca/disk/used_bytes | dataset, state | The space occupied by the data files on the data collection pod's disk, in bytes. An increase in this value over time for the ready_to_upload state implies that the agent is lagging behind; for the failed state, it implies that files are piling up on disk and not being uploaded. This value is computed every 60 seconds. |
| /udca/server/pruned_file_count | dataset, state | UPLOADED, FAILED, or DISCARDED. |
| /udca/server/retry_cache_size | dataset | A count of the number of files, by dataset, that UDCA is retrying to upload. After 3 retries for each file, UDCA moves the file to the /failed subdirectory and removes it from this cache. An increase in this value over time implies that the cache is not being cleared, which happens when files are moved to the /failed subdirectory after 3 retries. |
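The /udca/server/local_file_oldest_ts value is easier to act on as an age than as a raw timestamp. The sketch below converts the milliseconds-since-epoch value into how long the oldest file has been waiting, treating 0 as "nothing waiting", as described in the table. Because the metric is computed every 60 seconds, the result can be up to about a minute stale. The function name is hypothetical, and now must be a timezone-aware datetime.

```python
from datetime import datetime, timezone
from typing import Optional


def oldest_file_age_seconds(oldest_ts_ms: int, now: datetime) -> Optional[float]:
    """Seconds since the oldest pending file was created, or None when the
    metric is 0 (no files were waiting to upload at compute time).

    `now` must be timezone-aware so it can be compared with a UTC time."""
    if oldest_ts_ms == 0:
        return None
    created = datetime.fromtimestamp(oldest_ts_ms / 1000, tz=timezone.utc)
    return (now - created).total_seconds()


now = datetime(2024, 1, 1, 0, 10, tzinfo=timezone.utc)
print(oldest_file_age_seconds(1704067200000, now))  # 600.0
```

An age that keeps growing across samples matches the table's warning that old files are still on disk.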

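Several UDCA metrics are histograms with the bucket bounds 100ms, 250ms, 500ms, 1s, 2s, 4s, 8s, 16s, 32s, and 64s, while the values themselves are reported in seconds. The sketch below shows which bucket a given latency falls into, assuming Prometheus-style buckets where each bound is an inclusive upper limit and larger values go to an overflow (+Inf) bucket. The constant and function names are hypothetical.

```python
import bisect

# Upper bounds, in seconds, of the UDCA latency buckets (100ms ... 64s).
LATENCY_BUCKETS_S = [0.1, 0.25, 0.5, 1, 2, 4, 8, 16, 32, 64]


def latency_bucket(seconds: float) -> str:
    """Label of the first bucket whose upper bound is >= seconds,
    or "+Inf" for values above the largest bound."""
    # bisect_left returns the index of the first bound >= seconds.
    i = bisect.bisect_left(LATENCY_BUCKETS_S, seconds)
    if i == len(LATENCY_BUCKETS_S):
        return "+Inf"
    return f"<= {LATENCY_BUCKETS_S[i]}s"


for value in (0.05, 0.3, 3, 100):
    print(value, latency_bucket(value))
```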
Cassandra metrics

The Prometheus service collects and processes metrics (as described in Metrics collection) for Cassandra just as it does for other hybrid services.

The following table describes the metrics and labels that Prometheus uses in the Cassandra metrics data. These labels are used in the metrics log entries.

| Metric name (excluding domain) | Label | Use |
|---|---|---|
| /cassandra/process_max_fds | | Maximum number of open file descriptors. |
| /cassandra/process_open_fds | | Open file descriptors. |
| /cassandra/jvm_memory_pool_bytes_max | pool | JVM maximum memory usage for the pool. |
| /cassandra/jvm_memory_pool_bytes_init | pool | JVM initial memory usage for the pool. |
| /cassandra/jvm_memory_bytes_max | area | JVM heap maximum memory usage. |
| /cassandra/process_cpu_seconds_total | | Total user and system CPU time spent, in seconds. |
| /cassandra/jvm_memory_bytes_used | area | JVM heap memory usage. |
| /cassandra/compaction_pendingtasks | unit | Outstanding compactions for Cassandra sstables. See Compaction for more information. |
| /cassandra/jvm_memory_bytes_init | area | JVM heap initial memory usage. |
| /cassandra/jvm_memory_pool_bytes_used | pool | JVM pool memory usage. |
| /cassandra/jvm_memory_pool_bytes_committed | pool | JVM pool committed memory usage. |
| /cassandra/clientrequest_latency | scope | Read request latency in the 75th percentile range, in microseconds. |
| /cassandra/jvm_memory_bytes_committed | area | JVM heap committed memory usage. |
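Several of these metrics are most useful as ratios or rates: process_open_fds divided by process_max_fds, jvm_memory_bytes_used divided by jvm_memory_bytes_max for the heap area, and the change in process_cpu_seconds_total over a time window. The sketch below computes both kinds of value. The function names are hypothetical. It also assumes that a non-positive maximum means "no defined limit"; the JVM reports -1 as the maximum for memory areas whose limit is undefined.

```python
from typing import Optional


def utilization(used: float, maximum: float) -> Optional[float]:
    """Fraction of a limit in use, or None when no limit is defined.

    The JVM reports -1 as the maximum for a memory area with no defined
    limit, so any non-positive maximum is treated as undefined."""
    if maximum <= 0:
        return None
    return used / maximum


def cpu_cores_used(cpu_seconds_start: float, cpu_seconds_end: float,
                   wall_seconds: float) -> float:
    """Average CPU cores consumed between two samples of a cumulative
    CPU-seconds counter such as process_cpu_seconds_total."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return (cpu_seconds_end - cpu_seconds_start) / wall_seconds


print(utilization(800, 1000))              # 0.8, e.g. open fds / max fds
print(utilization(2_000_000, -1))          # None, no defined JVM limit
print(cpu_cores_used(100.0, 130.0, 60.0))  # 0.5
```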